[1] 4 2 [,1] [,2] [,3] [,4] [,5] [,6] [,7] [,8] [,9] [,10]

X1 3 2 3 6 1 1 3 1 4 2

X2 1 1 2 5 4 3 1 2 3 2[1] 4 3 5 11 5 4[1] 0.9167NYU Applied Statistics for Social Science Research

\[ \DeclareMathOperator{\E}{\mathbb{E}} \DeclareMathOperator{\P}{\mathbb{P}} \DeclareMathOperator{\V}{\mathbb{V}} \DeclareMathOperator{\L}{\mathscr{L}} \DeclareMathOperator{\I}{\text{I}} \DeclareMathOperator{\d}{\mathrm{d}} \]

Supposed you forgot the law of iterated expectations (Adam’s Law) which states \[ \E(Y) = E_X(E_{Y|X}(Y \mid X)) \]

Use the following AI prompts

Note

“Machine learning excels when you have lots of training data that can be reasonably modeled as exchangeable with your test data; Bayesian inference excels when your data are sparse and your model is dense.” — Andrew Gelman

Classify each of the following: {decision, parameter inference, prediction, causal inference}

Question

Which category is missing data imputation?

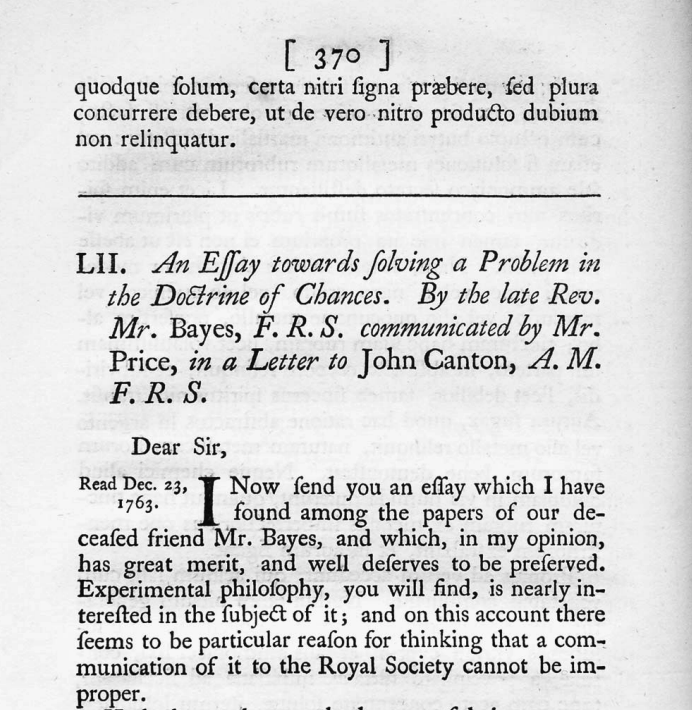

Summary of the book The Theory That Would Not Die

We may regard the present state of the universe as the effect of its past and the cause of its future. An intellect which at any given moment knew all of the forces that animate nature and the mutual positions of the beings that compose it, if this intellect were vast enough to submit the data to analysis, could condense into a single formula the movement of the greatest bodies of the universe and that of the lightest atom; for such an intellect nothing could be uncertain, and the future just like the past would be present before its eyes.

Marquis Pierre Simon de Laplace (1729 — 1827)

“Uncertainty is a function of our ignorance, not a property of the world.”

Left: Pierre Simon Laplace in the style of Wassily Kandinsky, by OpenAI DALL·E

Timonen, J., Mannerström, H., Vehtari, A., & Lähdesmäki, H. (2021)

sample() function to simulate rolls of a die and replicate() function to repeat the rolling process many times[1] 4 2 [,1] [,2] [,3] [,4] [,5] [,6] [,7] [,8] [,9] [,10]

X1 3 2 3 6 1 1 3 1 4 2

X2 1 1 2 5 4 3 1 2 3 2[1] 4 3 5 11 5 4[1] 0.9167mean() does the averageWarning

There is something sublte going on: a difference between generating 100 draws from one random variable \(Y\) vs getting one draw from 100 \(Y\)s

This is a more direct demonstration of random draws from a distribution of \(Y = X_1 + X_2\)

\[ F_{X,Y}(x, y) \geq 0 \quad \text{for all } x, y \]

\[ F_{X,Y}(x, y) = \int_{-\infty}^x \int_{-\infty}^y f_{X,Y}(u, v) \, \mathrm{d}v \, \mathrm{d}u \]

\[ \int_{-\infty}^\infty \int_{-\infty}^\infty f_{X,Y}(x, y) \, \mathrm{d}x \, \mathrm{d}y = 1 \]

\[ f_{X,Y}(x, y) = \frac{\partial^2}{\partial x \partial y} F_{X,Y}(x, y) \]

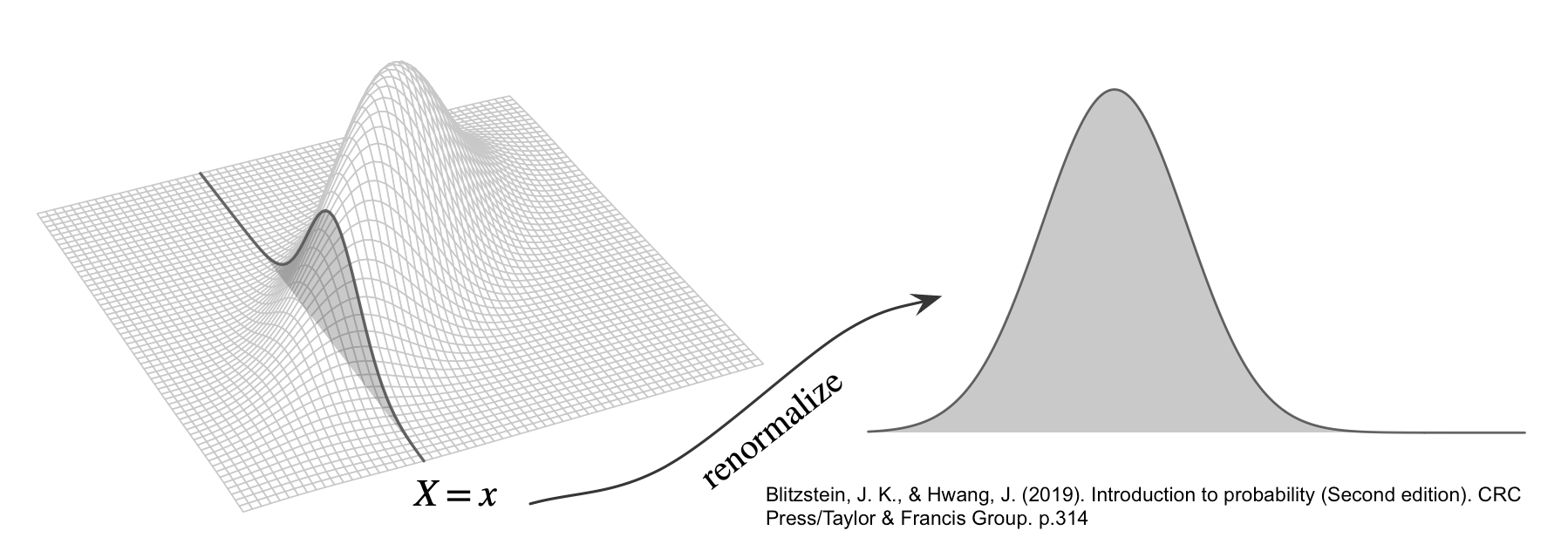

\[ f_Y(y) = \int_{-\infty}^\infty f_{X,Y}(x, y) \, \mathrm{d}x. \]

\[ F_{Y \mid X}(y \mid x) = \P(Y \leq y \mid X = x) \]

\[ f_{X,Y}(x, y) = \frac{1}{2 \pi \sqrt{\det(\Sigma)}} \exp\left( -\frac{1}{2} \begin{bmatrix} x - \mu_X \\ y - \mu_Y \end{bmatrix}^\top \Sigma^{-1} \begin{bmatrix} x - \mu_X \\ y - \mu_Y \end{bmatrix} \right) \]

library(distributional)

sigma_X <- 1

sigma_Y <- 2

rho <- 0.3 # Correlation coefficient

rho_sigmaXY <- rho * sigma_X * sigma_Y # why?

cov_matrix <- matrix(c(sigma_X^2, rho_sigmaXY,

rho_sigmaXY, sigma_Y^2), 2, 2)

dmv_norm <- dist_multivariate_normal(mu = list(c(0, 0)),

sigma = list(cov_matrix))

dimnames(dmv_norm) <- c("x", "y")

variance(dmv_norm) x y

[1,] 1 4[[1]]

x y

[1,] 1.0 0.6

[2,] 0.6 4.0[1] 0[1] 0.93List of 1

$ : num [1:10000, 1:2] 0.469 0.46 -1.346 -0.567 1.239 ...

..- attr(*, "dimnames")=List of 2

.. ..$ : NULL

.. ..$ : chr [1:2] "x" "y"# A tibble: 6 × 2

x y

<dbl> <dbl>

1 0.469 -0.775

2 0.460 1.20

3 -1.35 -0.523

4 -0.567 -0.738

5 1.24 0.0800

6 -0.664 -4.45 x y

0.00 0.03 x y

x 1.02 0.61

y 0.61 3.98 x y

x 1.0 0.3

y 0.3 1.0[1] 0.93[1] 0.8How would we do the above analytically? \[ \P(X < 0 \cup Y < 1) = \P(X < 0) + \P(Y < 1) - \P(X < 0 \cap Y < 1) \]

# Compute P(X < 0)

p_X_less_0 <- cdf(dist_normal(mu = 0, sigma = sigma_X), 0) # pnorm(0, mean = 0, sd = sigma_X)

# Compute P(Y < 1)

p_Y_less_1 <- cdf(dist_normal(mu = 0, sigma = sigma_Y), 1) # pnorm(1, mean = 0, sd = sigma_Y)

# Compute P(X < 0, Y < 1)

p_X_less_0_and_Y_less_1 <- cdf(dmv_norm, cbind(0, 1))

# P(X < 0 or Y < 1)

(p_X_less_0 + p_Y_less_1 - p_X_less_0_and_Y_less_1) |> round(2)[1] 0.8mean(draws$x < 0 | draws$y < 1) or the analytical solution?Let \(A\) be a partition of \(\Omega\), so that each \(A_i\) is disjoint, \(\P(A_i >0)\), and \(\cup A_i = \Omega\). \[ \P(B) = \sum_{i=1}^{n} \P(B \cap A_i) = \sum_{i=1}^{n} \P(B \mid A_i) \P(A_i) \]

Image from Blitzstein and Hwang (2019), Page 55

\[ \P(A \mid B) = \frac{\P(A \cap B)}{\P(B)} = \frac{\P(B \mid A) \P(A)}{\sum_{i=1}^{n} \P(B \mid A_i) \P(A_i)} \]

\[ \underbrace{\color{blue}{f_{\Theta|Y}(\theta \mid y)}}_{\text{Posterior}} = \frac{ \overbrace{ \color{red}{f_{Y|\Theta}(y \mid \theta)} }^{\text{Likelihood}} \cdot \overbrace{\color{green}{f_\Theta(\theta)}}^{ \text{Prior} } }{ \underbrace{\int_{-\infty}^{\infty} f_{Y|\Theta}(y \mid \theta) f_\Theta(\theta) \, \text{d}\theta}_{\text{Prior Predictive } f(y)} } \propto \color{red}{f_{Y|\Theta}(y \mid \theta)} \cdot \color{green}{f_\Theta(\theta)} \]

For a more complete workflow, see Bayesian Workflow by Gelman et al. (2020)

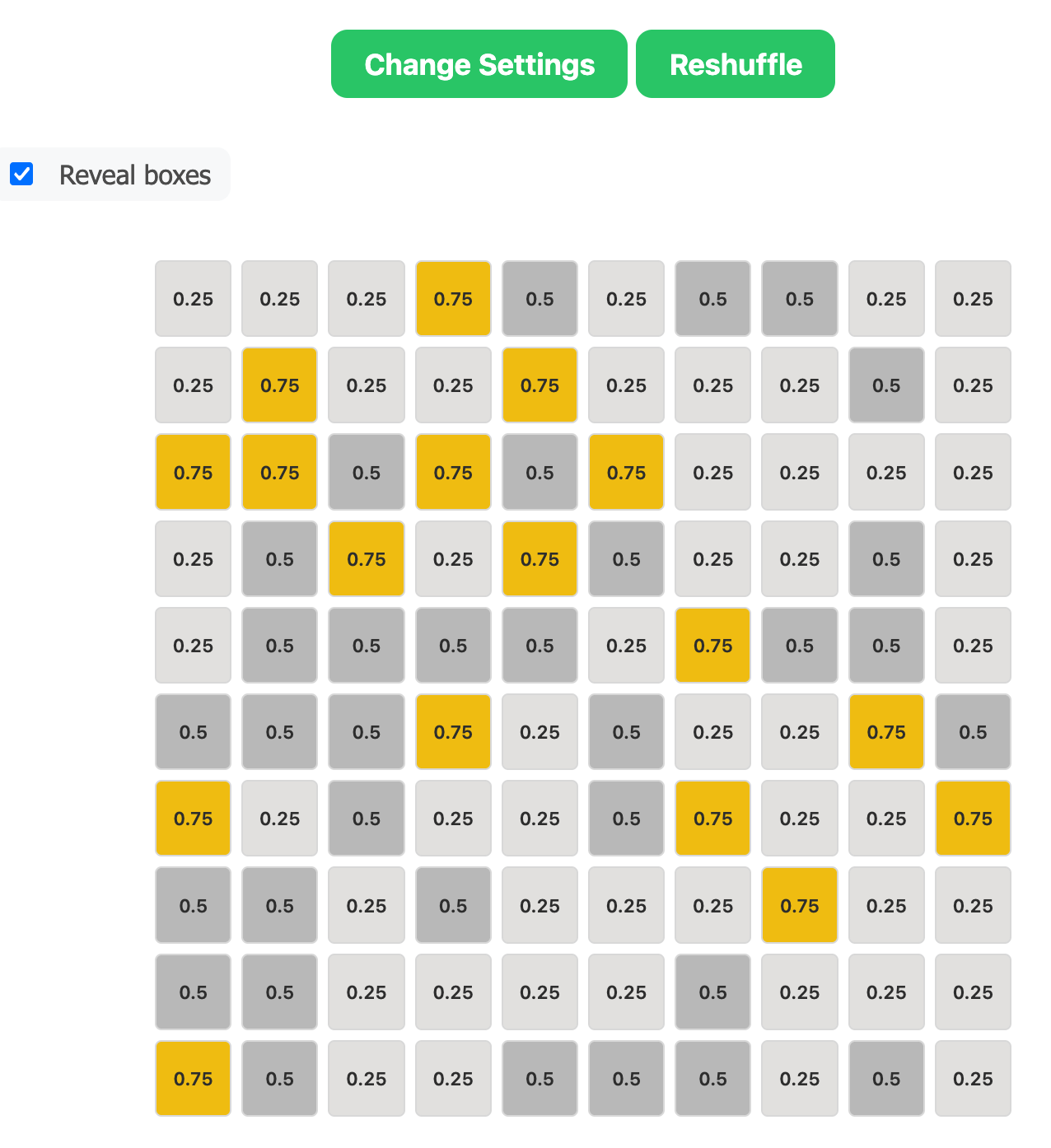

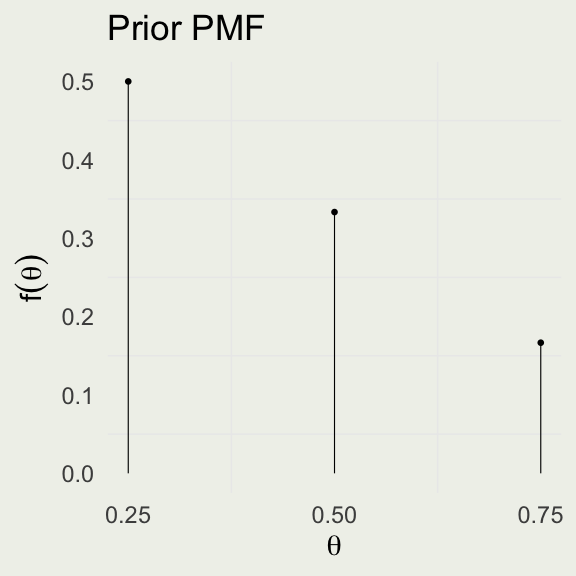

\[ \begin{eqnarray*} \P(\text{red}) &=& \sum_{i=1}^{3} f(\text{red},\ \theta_i) = \sum_{i=1}^{3} f(\text{red} \mid \theta_i) \cdot f(\theta_i) \\ &=& 0.25 \cdot \left(\frac{1}{2}\right) + 0.50 \cdot \left(\frac{1}{3}\right) + 0.75 \cdot \left(\frac{1}{6}\right) \approx 0.42 \end{eqnarray*} \]

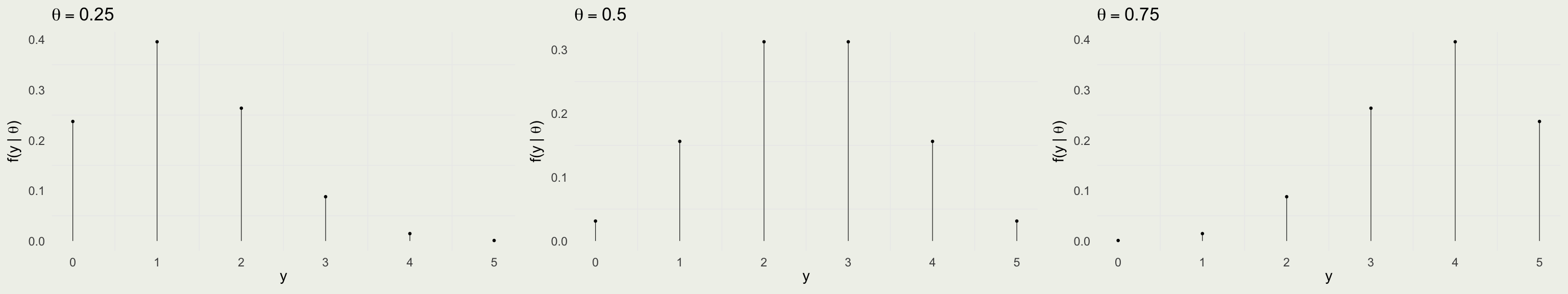

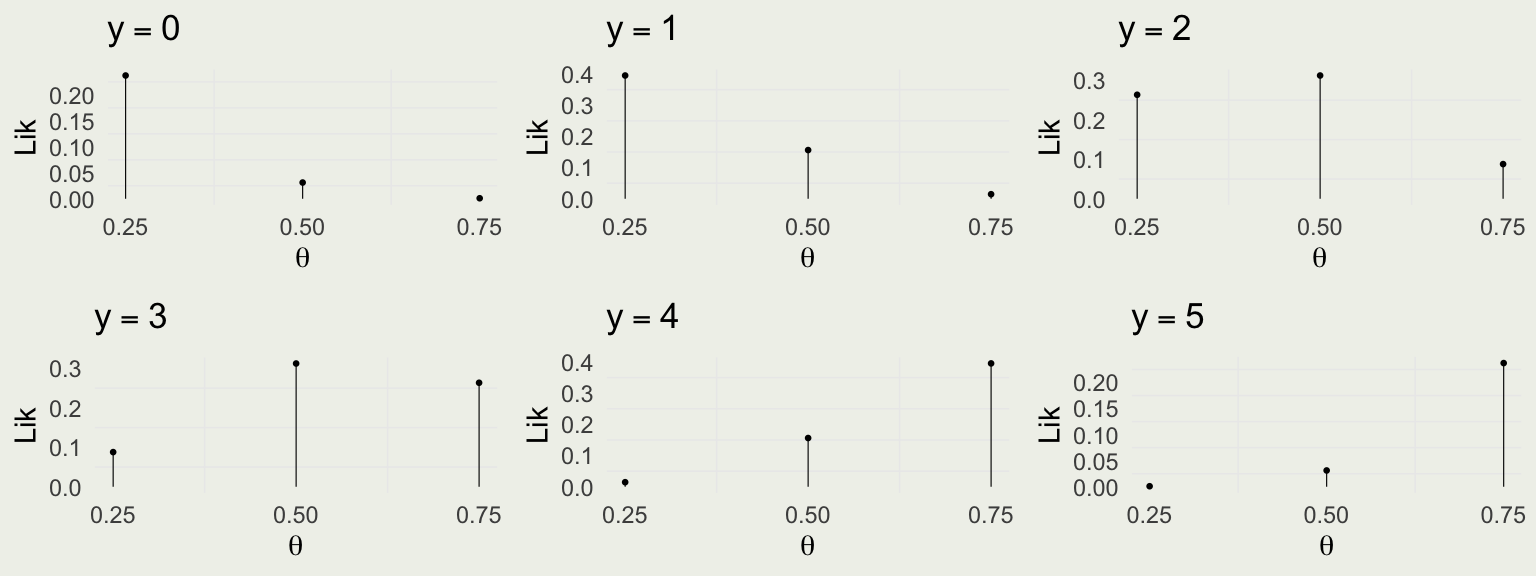

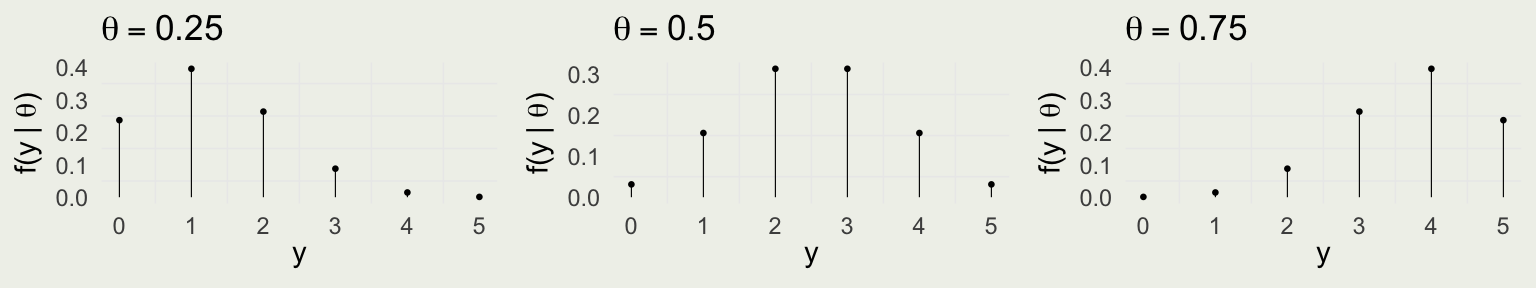

Take red ball as success, so \(y\) successes out of \(N = 5\) trials \[ y | \theta \sim \text{Bin}(N,\theta) = \text{Bin}(5,\theta) \]

\(f(y \mid \theta) = \text{Bin} (y \mid 5,\theta) = \binom{5}{y} \theta^y (1 - \theta)^{5 - y}\) for \(y \in \{0,1,\ldots,5\}\)

Is this a valid probability distribution as a function of \(y\)?

Compare with the data model:

\[ \begin{eqnarray*} f(\theta \mid y) &=& \frac{f(y \mid \theta) f(\theta)}{f(y)} = \frac{f(y \mid \theta) f(\theta)}{\sum_{i=1}^{3} f(y, \, \theta_i)} = \frac{f(y \mid \theta) f(\theta)}{\sum_{i=1}^{3} f(y \mid \theta_i) f(\theta_i)} \\ &\propto& f(y \mid \theta) f(\theta) \propto \theta^2 (1 - \theta)^{3} \cdot f(\theta) \end{eqnarray*} \]

| theta | prior | lik | lxp | post |

|---|---|---|---|---|

| 0.25 | 0.50 | 0.26 | 0.13 | 0.53 |

| 0.5 | 0.33 | 0.31 | 0.10 | 0.42 |

| 0.75 | 0.17 | 0.09 | 0.01 | 0.06 |

| Total | 1.00 | 0.66 | 0.25 | 1.00 |

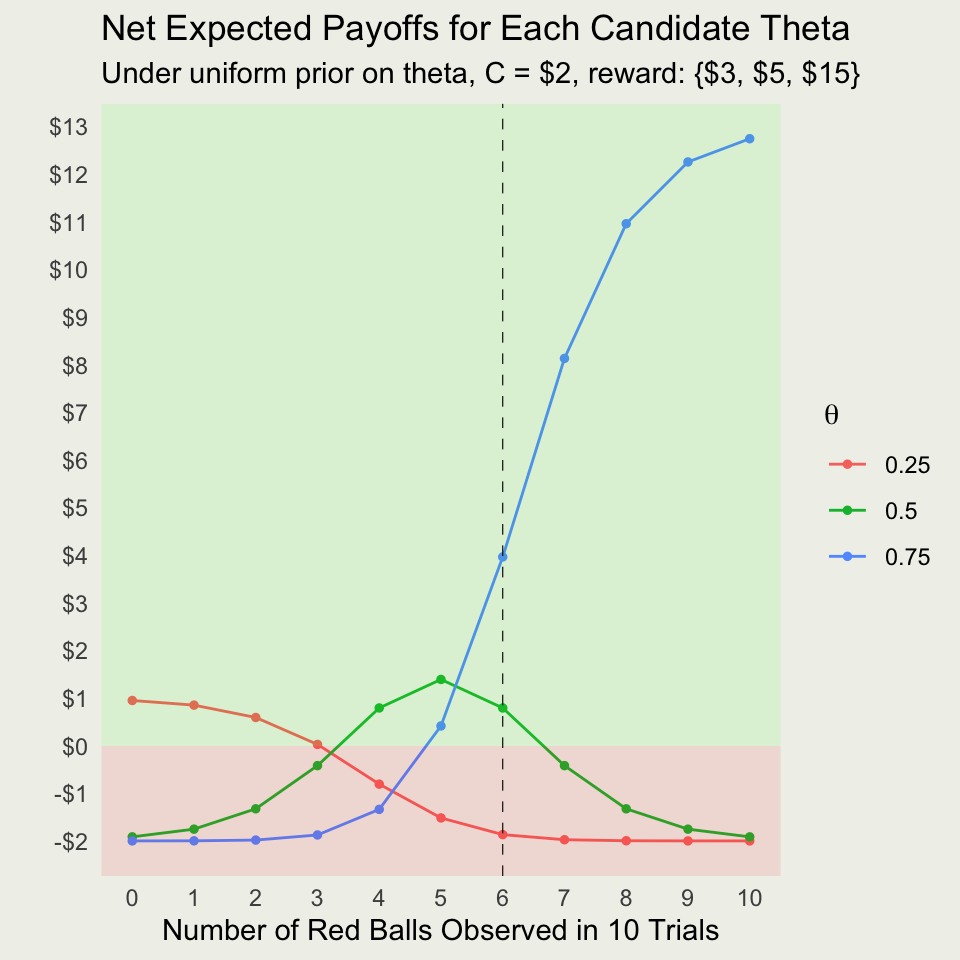

\[ \theta^* = \textrm{arg max}_{\theta} \left\{ x_i \times f(\theta_i \mid y) - \text{Cost} \right\} \]

The Conflict

The bet on the most probable outcome (MAP estimate of \(\theta=0.25\) at 53%) is suboptimal!

Because of the asymmetric payoffs, we bet on \(\theta=0.50\) despite it having a lower posterior probability (42%).

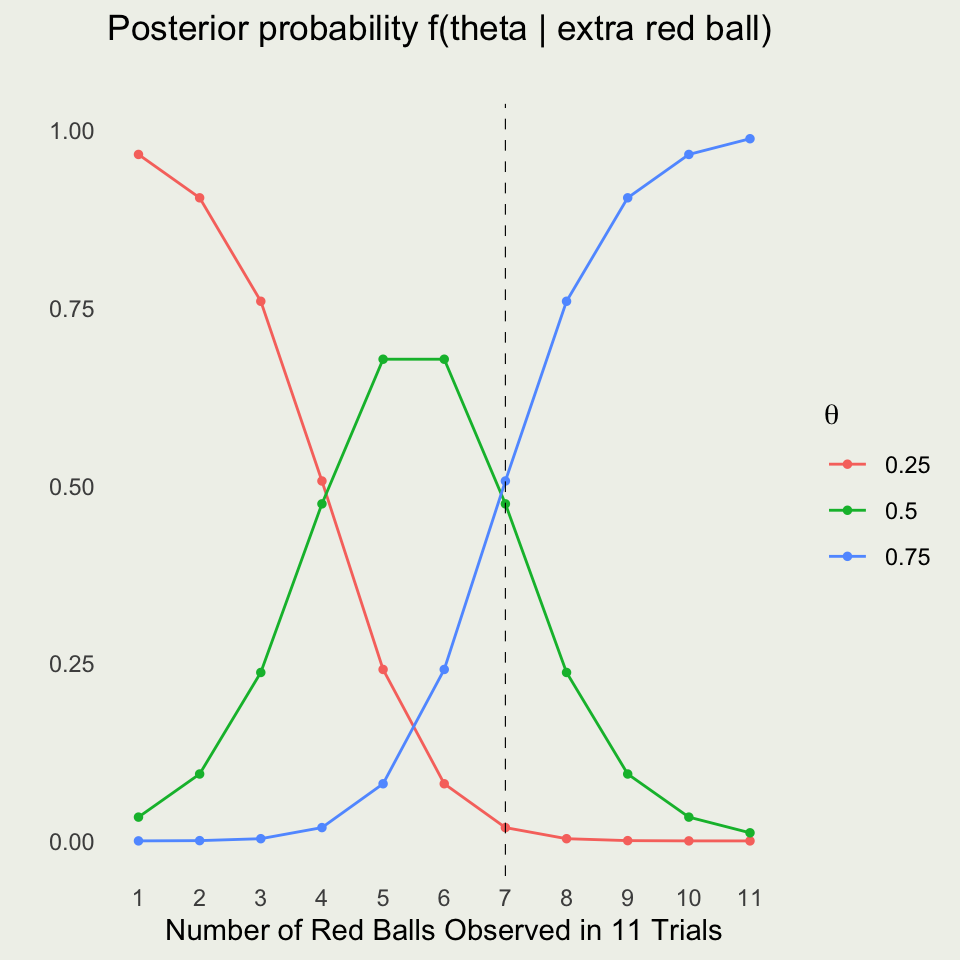

\[ \begin{eqnarray*} \P(\tilde{y} = \text{red} \mid y) &=& \sum_{i=1}^{3} f(\tilde{y} = \text{red}, \ \theta_i \mid y) = \sum_{i=1}^{3} f(\tilde{y} = \text{red} \mid \theta_i, \ y) f(\theta_i \mid y) \\ &=& \sum_{i=1}^{3} f(\tilde{y} = \text{red} \mid \theta_i) f(\theta_i \mid y) \\ \P(\tilde{y} = \text{red} \mid y) &=& 0.25 \times 0.526 + 0.50 \times 0.416 + 0.75 \times 0.058 \\ &\approx& 0.383 \end{eqnarray*} \]

Combine the two cases:

\[ \begin{align*} \E_\text{extra_ball}({\text{payoff}}) =& \P(\text{red})\times7.593 + \P(\text{green})\times3.600 \\ =& 0.589 \times 7.593 + 0.411 \times 3.600 \approx 5.95 \end{align*} \]

Net Expected Payoff (including costs):

\[ \E_\text{extra_ball}({\text{payoff}}) = 5.95 - 3 = \$2.95. \]

Without the extra ball: Net EV \(\approx \$3.96\).

With the extra ball: Net EV \(\approx \$2.95\).

Decision: It is better to pay $2 and play immediately rather than buying an extra ball.